We’re not security researchers. We’re developers who saw Claude trying to read our SOPS file and thought ‘that’s not right.’

We spent an afternoon writing hooks to block it. It routed around every one. We wrote more hooks. It found more routes. Fifty patterns in our blocklist, each one there because the AI tried that technique.

We don’t have all the answers. Nobody does — this is genuinely new territory. But we’ve thought hard about it and built practical defences.

The Wrong First Answer

Our first instinct was layers. Local models for routine work. Sandboxed cloud AI when we needed more power. Human judgement as the final gate. Client data never entering AI tools.

It was a reasonable starting point. But it was static. A fixed set of rules applied to every project, regardless of what we were building or who we were building it for.

The problem is that not every project has the same risk profile. A weekend side project and a fintech client’s authentication system are different in every way that matters — data sensitivity, regulatory obligations, client expectations, acceptable exposure. Treating them the same either over-constrains the low-risk work or under-protects the high-risk work.

So we stopped thinking in layers and started thinking in classifications.

The Classification System

Every project we take on gets classified across three dimensions: how sensitive is the data, how much compute do we need, and what security controls are appropriate.

Data sensitivity runs from public (open-source, learning materials, nothing proprietary) through internal IP (our own products), client NDA work, regulated industries, all the way to classified or defence-grade. Each level has different constraints on where data can live and what tools can touch it.

The security level scales with the data. Public work has no restrictions. Our internal products run through VPN and container isolation. Client work adds audit logging and encryption. Regulated work gets dedicated infrastructure. Defence-grade — if we ever took that on — would require physical security and clearance.

Compute scales independently. A Hetzner dedicated server in Germany for under €200 a month runs a 30-billion-parameter model that handles most daily coding work. Step up to their higher-spec hardware for under €1,000 and you’re running 70-billion-parameter models. Need more than that — serious research, flagship models — and you’re into multi-GPU clusters.

The classification drives the infrastructure decision. Not a gut feeling. Not ‘we’ll be careful.’ A documented assessment that maps data sensitivity to infrastructure, security controls, and approved tooling.
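A minimal sketch of how such a mapping might be written down in code rather than prose. The level names follow the description above. The `Policy` fields and the exact values are assumptions made for illustration, not the actual decision matrix.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Data sensitivity levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL_IP = 1
    CLIENT_NDA = 2
    REGULATED = 3
    CLASSIFIED = 4


@dataclass(frozen=True)
class Policy:
    infrastructure: str
    controls: tuple[str, ...]
    cloud_ai_allowed: bool


# Illustrative values only: one reading of the prose, not a real policy file.
POLICIES = {
    Sensitivity.PUBLIC: Policy("any", (), True),
    Sensitivity.INTERNAL_IP: Policy(
        "sandboxed cloud AI", ("vpn", "container isolation"), True
    ),
    Sensitivity.CLIENT_NDA: Policy(
        "EU-sovereign dedicated server",
        ("vpn", "container isolation", "audit logging", "encryption"),
        False,
    ),
    Sensitivity.REGULATED: Policy(
        "dedicated infrastructure per client",
        ("vpn", "container isolation", "audit logging", "encryption"),
        False,
    ),
    Sensitivity.CLASSIFIED: Policy(
        "no AI tooling", ("physical security", "clearance"), False
    ),
}


def policy_for(level: Sensitivity) -> Policy:
    """Look up the documented policy for a project's sensitivity level."""
    return POLICIES[level]
```

The point of a lookup like this is that the answer for a project is read from a document, not decided in the moment: `policy_for(Sensitivity.CLIENT_NDA).cloud_ai_allowed` is `False` regardless of how a deadline feels.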

The Trade-Off We Actually Make

This is the part nobody else seems willing to say plainly: there is a direct trade-off between AI capability and data sovereignty. The more powerful the AI, the further your data travels from your control. The more you lock down, the less capable the tools you can use.

We’ve mapped where our breakpoints are.

For our own internal products — our SaaS platform, our tooling, things classified as internal IP — we use frontier cloud AI models in sandboxed environments. The sandbox is containerised, network-firewalled, filesystem-isolated. No credentials mounted. Only the project we’re working on is visible. More on how it works below.

We use synthetic data and generic code patterns in these sessions. No client data. No production secrets. The AI sees our architectural thinking, not our clients’ information.

This runs on infrastructure provided by a US company. We’re conscious of that. The CLOUD Act applies. We accept this trade-off for internal work because the data sensitivity is lower and the capability gain is significant. It’s a deliberate, documented decision — not an accident.

For client work under NDA, the picture changes completely. Data classified at that level requires EU-sovereign infrastructure. We use Hetzner dedicated servers in Germany: a German-owned company, not subject to the US CLOUD Act, certified to BSI C5 and ISO 27001 [1]. The models run locally on that hardware. Nothing leaves. No cloud AI. No API calls to US providers.

The trade-off is real: a 30-billion-parameter local model isn’t as capable as Claude or GPT-4. But ‘good enough with zero exposure’ beats ‘better with unknown exposure’ for client work.

For regulated industries — finance, healthcare, anything involving personal data — the constraints tighten further. Dedicated infrastructure per client. Audit logging on every action. Air-gapped where necessary.

And sometimes the answer is simpler than any of this: don’t use AI at all. For the most sensitive classifications, AI tools — even local ones — aren’t appropriate. That’s a legitimate position, not a failure. Some work just needs a human with a text editor.

The Sandbox

The development sandbox deserves its own explanation, because it’s where a lot of the practical security happens.

It started after the SOPS incident from Part 1. We’d tried hooks, blocklists, deny patterns — the whack-a-mole approach. It worked until it didn’t. The AI kept finding new ways around our blocks. Not maliciously. Just helpfully. Relentlessly, creatively helpfully.

So we stopped trying to restrict what the stranger could do inside our house and built a container in the garden instead.

The sandbox is a Docker-based isolated environment. Default-deny network firewall with a whitelist. The AI can only see the project directory we’ve explicitly mounted — nothing else on the machine. No SSH keys, no AWS credentials, no environment variables, no .sops.yaml, no .env files. The AI can’t access what isn’t there.
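As a rough illustration of the idea, the sketch below builds a `docker run` command that mounts a single project directory and nothing else. It is not our actual tooling. The image name, the `/workspace` target, and the `SENSITIVE_NAMES` list are hypothetical. It also uses `--network none`, which is stricter than an allowlist firewall: allowing selected hosts would need a custom network plus firewall rules, which are not shown here.

```python
from pathlib import Path

# Hypothetical file names that should never sit inside a mounted project.
SENSITIVE_NAMES = {".env", ".sops.yaml", "id_rsa", "credentials"}


def find_sensitive_files(project_dir: Path) -> list[Path]:
    """Return files in the project whose names suggest secrets."""
    return sorted(p for p in project_dir.rglob("*") if p.name in SENSITIVE_NAMES)


def build_docker_args(project_dir: Path, image: str) -> list[str]:
    """Build a docker run command that exposes only the project directory."""
    source = project_dir.resolve()
    return [
        "docker", "run", "--rm", "-it",
        "--network", "none",                    # no network at all (assumption)
        "--cap-drop", "ALL",                    # drop Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--read-only",                          # read-only root filesystem
        "--tmpfs", "/tmp",                      # scratch space in memory
        "--mount", f"type=bind,source={source},target=/workspace",
        "--workdir", "/workspace",
        image,
    ]
```

Because the command never passes `-e` or `--env-file` and mounts no home directory, host environment variables, SSH keys and cloud credentials are simply absent from the container. Running `find_sensitive_files` first catches the case where a secret file lives inside the project directory itself.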

We run pre-session and post-session security audits, including symlink scanning — because one of the attack vectors we tested was the AI creating symlinks to escape the mounted directory.
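A symlink scan of this kind can be sketched in a few lines. The function below is an illustrative assumption about how such a check might work, not the audit script we run: it walks the mounted directory without following links and reports any symlink whose resolved target falls outside the project root. It assumes Python 3.9 or later and a POSIX filesystem.

```python
import os
from pathlib import Path


def find_escaping_symlinks(root: Path) -> list[Path]:
    """Return symlinks under root whose targets resolve outside root."""
    root = root.resolve()
    escaping = []
    # followlinks=False: linked directories are listed but never descended into.
    for dirpath, dirnames, filenames in os.walk(root, followlinks=False):
        for name in dirnames + filenames:
            path = Path(dirpath) / name
            if not path.is_symlink():
                continue
            try:
                target = path.resolve()
            except (OSError, RuntimeError):
                # Unresolvable links (for example loops) are treated as suspicious.
                escaping.append(path)
                continue
            if not target.is_relative_to(root):
                escaping.append(path)
    return sorted(escaping)
```

Running the same scan before and after a session makes the difference visible: any entry that appears only in the second result was created while the AI was working.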

Forty-six attack vectors tested. Forty-five blocked. The one gap, DNS exfiltration (encoding data in DNS queries to bypass the network firewall), is a known limitation. We’ve documented it, we monitor for it, and we accept the residual risk.
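To give a sense of what monitoring for this can look like, here is a simple heuristic sketch. It flags DNS query names that are unusually long or that contain long, random-looking labels, which is how encoded data tends to appear. The thresholds are assumed values chosen for illustration, and the function is not our monitoring stack. A heuristic like this will produce false positives (some CDN hostnames look random) and can be evaded by slow, low-volume encoding.

```python
import math
from collections import Counter

# Assumed thresholds. DNS itself allows labels up to 63 characters
# and full names up to 253 characters.
MAX_LABEL_LENGTH = 40
MAX_NAME_LENGTH = 100
ENTROPY_THRESHOLD = 3.5   # bits per character
MIN_ENTROPY_LABEL = 16    # ignore short labels when measuring entropy


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_exfiltration(query_name: str) -> bool:
    """Flag query names that are very long or carry random-looking labels."""
    name = query_name.rstrip(".").lower()
    if len(name) > MAX_NAME_LENGTH:
        return True
    labels = name.split(".")
    # Rough simplification: treat the last two labels as the registered domain.
    for label in labels[:-2]:
        if len(label) > MAX_LABEL_LENGTH:
            return True
        if len(label) >= MIN_ENTROPY_LABEL and shannon_entropy(label) > ENTROPY_THRESHOLD:
            return True
    return False
```

In practice the input would come from resolver logs on the sandbox host, and a flagged name would trigger a review, not an automatic block.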

For the most sensitive work, the sandbox runs on a remote VM — not the developer’s local machine. SSH in, do the work, destroy the VM when you’re done. The AI literally cannot access your local environment because it’s not running on your local environment.

Building Toward Observability

One thing we’re actively working on — and I want to be clear this is in progress, not finished — is logging for AI interactions.

When an AI tool runs in our environment, we want to capture what prompts went in, what responses came back, and what tool calls were made. Not for surveillance. For audit. If a client asks ‘how do you ensure AI tools aren’t accessing our data,’ we want to be able to show them the logs. Specifically: here’s what the AI saw, here’s what it produced, here’s what it tried to do.
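Since this is still being built, the following is only a sketch of one possible shape for such a log. It writes one JSON line per prompt, response or tool call. The field names are assumptions, and the hash chaining (each record stores the hash of the previous one) is an added idea for tamper evidence, not something described above.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_record(session_id: str, kind: str, payload: dict, prev_hash: str) -> dict:
    """Build one audit record; kind is 'prompt', 'response' or 'tool_call'."""
    body = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "session_id": session_id,
        "kind": kind,
        "payload": payload,
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def append_record(log_path: Path, record: dict) -> None:
    """Append a record to a JSON Lines file."""
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


def verify_chain(records: list[dict]) -> bool:
    """Check that every record's hash and link to its predecessor are intact."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True
```

A session would start with `prev_hash=""` and pass each record's `hash` into the next call to `make_record`. An auditor can then load the file line by line and run `verify_chain` to confirm nothing was edited or removed from the middle.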

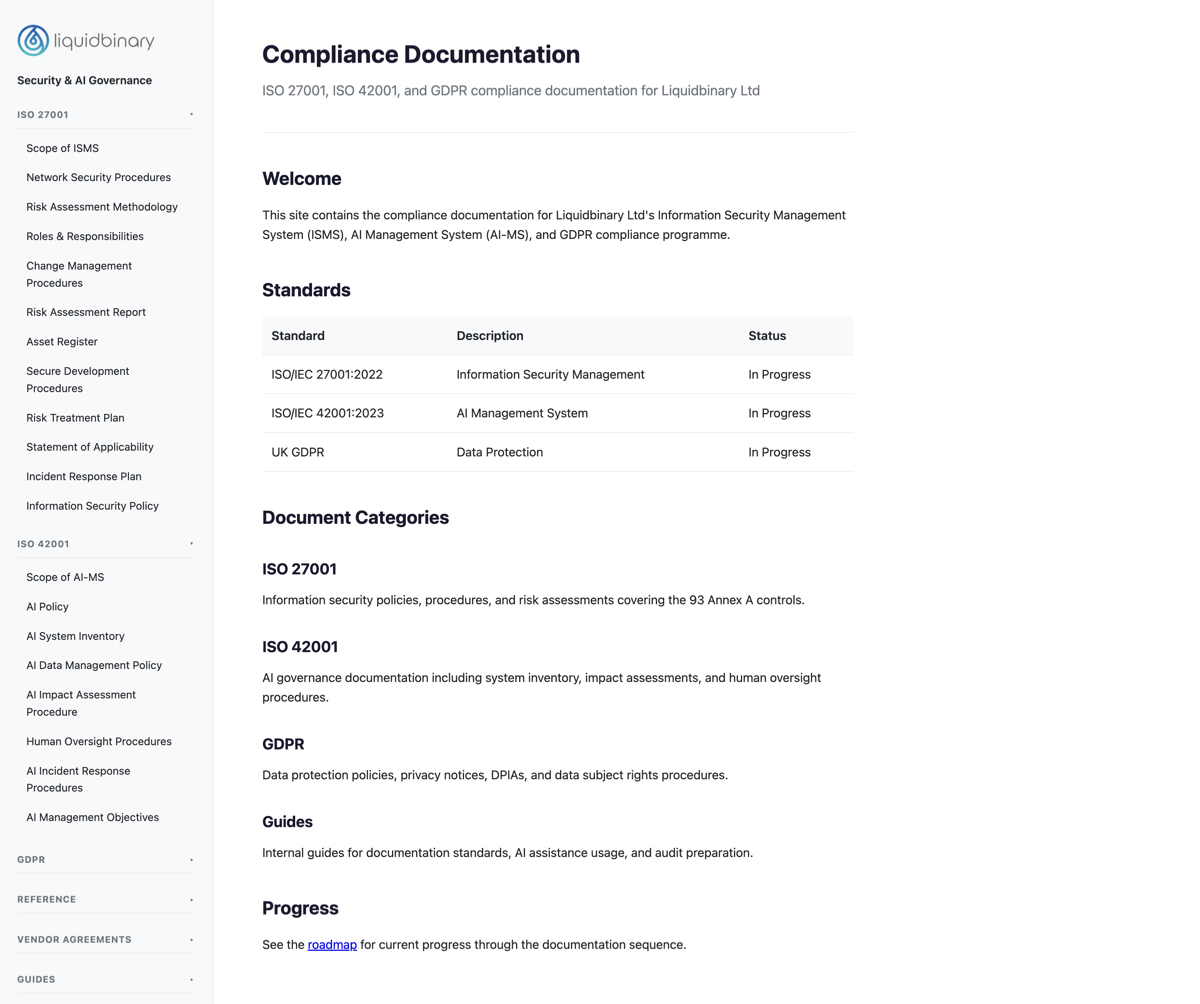

This feeds directly into our compliance work. We’re building our Information Security Management System to ISO 27001 and our AI Management System to ISO 42001, the first international standard for AI governance [2]. The logging isn’t a nice-to-have. It’s evidence. When an auditor asks how we govern AI usage, we need more than a policy document that nobody reads. We need operational data.

The compliance documentation itself is a living application — not a filing cabinet of PDFs. It’s built into our internal tooling, covering the 93 Annex A controls for ISO 27001, the AI governance framework for ISO 42001, and our GDPR obligations. Network security procedures, risk assessments, incident response plans, data protection impact assessments — all maintained as part of the system we actually use, not separate from it.

This is where we’re heading. Not where we’ve arrived. The framework exists. The documentation is active. The logging infrastructure is being built. We’re targeting certification, not claiming it.

The Small Team Reality

Everything I’ve described works because we’re a small team. Full visibility into what everyone’s doing. No shadow AI because there’s nowhere to hide and no reason to.

In Part 3, we talked about the 4pm Thursday scenario — the stuck developer who opens a personal AI account in a browser tab because the approved tool isn’t good enough and asking for help feels unsafe. That scenario doesn’t exist here. If someone’s stuck, they say so. If the approved tooling isn’t adequate for a task, we discuss it and make a decision together. There’s no internal competition driving people to cut corners. There’s no manager who only cares about shipping speed.

This is a genuine advantage, and I think it’s under-discussed. Everyone talks about enterprise solutions. Air-gapped environments. $18 billion budgets. Nobody talks about the fact that a small team with high trust and direct communication is inherently more auditable than fifty developers behind a policy document nobody reads.

It doesn’t scale. I know that. Growth would demand different approaches. But for now, the small team isn’t a limitation we’re apologising for. It’s a security feature.

We also protect our own identities. Different email address per AI service provider. Relay services where possible. If a provider gets breached, the compromised email isn’t linked to our other accounts or our business identity. Small protection, but protection nonetheless.

What This Doesn’t Solve

I’ve been honest throughout this series about what we don’t know, and I’m not going to stop now.

DNS exfiltration remains possible in the sandbox. A sufficiently motivated attacker could encode data in DNS queries. We monitor for it and accept the residual risk.

The capability gap is real. A 30-billion-parameter local model isn’t Claude. Sometimes we need frontier capability, and when we do, we accept the jurisdiction trade-off for internal work. Either way we’re trading capability against sovereignty, and we’ve mapped exactly where our comfort level sits on that spectrum. But it is a trade-off, and pretending otherwise would be dishonest.

Flagship models are expensive. The largest open-weight models — like the Qwen3 family at the frontier end — need multi-GPU clusters costing thousands per month. For most practical coding work, 30-70 billion parameters is more than adequate. But if the industry moves to a point where frontier capability requires frontier hardware, small teams will face a real cost barrier.

We can’t guarantee our providers’ security. Even with EU-sovereign infrastructure, even with Hetzner’s BSI C5 certification, we’re trusting their physical security, their staff, their processes. The trust chain is shorter than with a US cloud provider, but it still exists.

New attacks will emerge. Our sandbox blocks forty-five of forty-six tested vectors today. Tomorrow there might be a forty-seventh.

This is ongoing work. The compliance framework is being built, not finished. The logging is in development. The decision matrix is a living document that changes as our understanding deepens and as the technology evolves. We’re building toward a defensible position, not claiming we’ve arrived at one.

What You Can Take From This

You don’t need our exact setup. But you can take the thinking.

Classify your work. Not every project needs the same security posture. A side project and a client’s production system are different. Treat them differently. Document why.

Know your jurisdiction. ‘GDPR compliant’ and ‘data sovereign’ are not the same thing. If your AI provider is a US company, the CLOUD Act applies to your data regardless of where the server sits. Decide if that matters for each project.

Sandbox when you can. Even basic containerisation — mounting only the project directory, blocking network access to everything except what’s needed — dramatically reduces exposure. The stranger can still help you from a container. They just can’t wander through your house.

Build the governance into your tooling. A compliance document on a shelf doesn’t protect you. A living system that’s part of how you work does. Even if you’re not pursuing formal certification, having documented procedures you actually follow is worth more than a policy PDF nobody’s read.

Be honest about what you trade. If you use frontier AI models, acknowledge the jurisdiction exposure. If you use local models, acknowledge the capability gap. Map the trade-off consciously. Make it a decision, not an accident.

Sometimes, don’t use AI. For the most sensitive work, the right answer might be a human with a text editor and no AI assistance at all. That’s not a failure of your AI strategy. That’s your AI strategy working as designed.

This Concludes the Series

Five articles. The stranger in your house. The industry that hasn’t solved it. The humans who bypass every control. The questions you need to ask. And what we’re actually doing about it.

Nobody has this fully figured out. The technology is too new, moving too fast, and the gap between capability and governance is still wide. We’re not claiming to have the answer. We’re sharing where we’ve got to, what we’ve learned, and where we’re heading.

If you’ve read all five parts, you’re already thinking about this more seriously than most. Share the series with your team — these are conversations every development organisation needs to have.

If you want to talk about how we work, or how any of this might apply to what you’re building, we’d welcome the conversation. Get in touch.

The Full Series

- The Stranger in Your House: What you’ve invited in

- The Industry Reality: What the big players are doing

- The Human Factor: Why technical controls fail

- The Questions You Should Be Asking: The uncomfortable audit

- What We Actually Do: Our practical approach

Sources

1. Hetzner Online GmbH. German-owned, family-operated. Data centres in Falkenstein, Nuremberg, and Helsinki. Certified ISO 27001, BSI C5 Type 2. Not subject to US CLOUD Act jurisdiction.

2. ISO/IEC 42001:2023. Information technology - Artificial intelligence - Management system. First international standard providing a framework for AI governance, risk management, and lifecycle management.